Defining Key Performance Indicators (KPIs)

A KPI is a quantifiable measure of the performance of the system under test (SUT) over time against a specific objective. KPIs give your team targets to aim for and milestones for gauging progress. They also help you identify and investigate potential problems with the SUT.

You can use record() to capture performance indicators and other metrics that are not part of the coverage model, such as the name and version strings identifying the SUT or the maximum speed of the SUT during a test. In contrast to coverage metrics, performance metrics are typically raw data and thus require either user interpretation or a user-defined formula for grading.

record() only captures and stores data. You must use it together with other OSC2 code that defines the expression whose value you want to capture. The examples below cover two kinds of metrics:

- Custom metrics use various OSC2 constructs to collect and manipulate the data to be recorded.

- Global metrics are attributes that are defined in top.info and collected for every run in a test suite, such as simulator and SUT version.
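Because performance metrics are recorded as raw values, grading them is left to you. As a minimal sketch of a user-defined grading formula, the Python snippet below scores per-run values of a recorded metric against a target. The function name, the sample data, and the target of at most two hard-braking events per run are assumptions made for illustration; none of them is part of Foretify or OSC2.

```python
def grade_kpi(values, target_max):
    """Return the fraction of runs whose recorded value is at or below the target."""
    if not values:
        raise ValueError("no recorded values to grade")
    passing = sum(1 for value in values if value <= target_max)
    return passing / len(values)


# Hypothetical recorded values of a metric such as num_of_hard_brakes, one per run.
hard_brakes_per_run = [0, 1, 3, 0, 2]

# Assumed target: at most 2 hard-braking events per run.
grade = grade_kpi(hard_brakes_per_run, target_max=2)
print(f"{grade:.0%} of runs met the target")
```

A pass-fraction formula is only one option; a weighted score or a worst-case bound may suit other KPIs better.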

Example: collect number of emergency braking occurrences

For example, you might want to collect the number of times the SUT performed emergency braking.

    extend top.info:
        emergency_brakes_threshold: acceleration

        event emergency_brake is @top.clk if sut.car.prev_state != null and\
            sut.car.prev_state.local_acceleration != null and\
            sut.car.prev_state.local_acceleration.lon > emergency_brakes_threshold and\
            sut.car.state.local_acceleration.lon <= emergency_brakes_threshold

        var num_of_hard_brakes: int = sample(num_of_hard_brakes + 1, @emergency_brake)

        record(num_of_hard_brakes, text: "Number of instances with hard braking")
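The event condition above fires only on the clock tick where the longitudinal acceleration crosses from above the threshold to at or below it, so one sustained braking phase is counted once. The Python sketch below mirrors that edge-counting logic outside of OSC2. The function name, the sample values, and the threshold of -5.0 are assumptions for illustration, and skipping a missing current sample is an extra guard that the OSC2 condition does not include.

```python
def count_hard_brakes(lon_accels, threshold):
    """Count crossings from above the threshold to at or below it."""
    count = 0
    prev = None
    for current in lon_accels:
        # Mirror the null checks: a crossing needs a valid previous sample.
        if prev is not None and current is not None:
            if prev > threshold and current <= threshold:
                count += 1
        prev = current
    return count


# Hypothetical longitudinal acceleration samples, one per clock tick.
# None stands for a tick with no acceleration data.
samples = [0.0, -2.0, -6.0, -7.0, -3.0, None, -1.0, -6.5]

print(count_hard_brakes(samples, threshold=-5.0))  # prints 2
```

The consecutive samples -6.0 and -7.0 belong to the same braking phase, so they contribute a single count.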

Add global metrics

Global metrics are attributes that are collected for every run in a test suite. They help you analyze the test suite by providing specific global information, such as simulator and map versions. Foretify records the global metrics for each run, and once your runs are uploaded to Foretify Manager, you can access and analyze them. Here are some ways to use global metrics to analyze a test suite:

- Filtering runs with a specific software version

- Filtering runs with a specific map version

- Creating statistics on which maps are being used
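To make these analyses concrete, the Python sketch below filters runs by a global metric and counts how often each map is used. It works on plain dictionaries and does not use the Foretify Manager API; the record layout, field names, and values are all hypothetical.

```python
from collections import Counter

# Hypothetical per-run global metrics, for example exported from a test suite.
runs = [
    {"run": "run_1", "sut_version": "1.0.1", "map": "town_a"},
    {"run": "run_2", "sut_version": "1.0.1", "map": "town_b"},
    {"run": "run_3", "sut_version": "1.0.2", "map": "town_a"},
]


def filter_runs(runs, metric, value):
    """Keep only the runs whose global metric equals the given value."""
    return [run for run in runs if run.get(metric) == value]


def usage_counts(runs, metric):
    """Count how many runs used each value of a global metric."""
    return Counter(run[metric] for run in runs if metric in run)


print([run["run"] for run in filter_runs(runs, "sut_version", "1.0.1")])
print(usage_counts(runs, "map"))
```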

Along with the predefined global metrics that Foretify collects, you can create your own global metrics. For example, you might want to:

- Version your driver and vehicle software separately. (The predefined SUT version only supports one SUT_version per run.) In this case, you can add an additional global metric, vehicle_sw_version.

- Document optional checks to see which have been loaded for which runs.

- Change the content of existing global metrics, such as adapting the SUT version.

Define the variables for global metrics

Define global metrics by extending one of the following structs:

- run_info: Should contain run-related data (for example, seed or run folder).

- sim_info: Should contain simulator-related data (for example, simulator name or version).

- model_info: Should contain verification model information (for example, SUT version or Foretify version).

- frun_info: Should contain FRun-related data (currently not in use).

You can extend any of these structs to create global metrics, as shown in the following example.

    extend sim_info:
        var vehicle_sw_version: string

Note

See Global metrics (records) for more information on these structs and the global attributes defined in them.

Record global metrics

You use record() to record the variables that will then become global metrics. You can reference the metric you have added using one of the following expressions:

g_info.run.<my-global-metric>

g_info.sim.<my-global-metric>

g_info.model.<my-global-metric>

g_info.frun.<my-global-metric>

The following example shows how to record the variable defined in the previous example in order to generate a global metric:

    extend top.info:
        event user_metrics is @end

        record(expression: g_info.sim.vehicle_sw_version, name: vehicle_sw_version, event: user_metrics)

Set the value for a global metric

A recorded variable yields a useful global metric only if it holds a value. Store data in the variable using one of the following methods:

- Manually set the variable in OSC2:

  OSC2 code: set global metric value

      extend sim_info:
          set vehicle_sw_version = "1.0.1"

  You can set the variable to any existing variable in the system.

- Set the variable using the --set invocation option, for example:

  Set global metric value when invoking Foretify

      $ foretify --set "top.g_info.sim.vehicle_sw_version=\"1.0.1\"" ...

  Using this method allows you to extract existing metadata to provide to Foretify. Also, this method allows you to override any value set in the OSC2 code.

Other methods exist, for example, setting the global metric from an orchestrator.
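As one way to pass existing metadata through --set, a launch script can assemble the option before invoking Foretify. The Python sketch below builds the argument list for the example above. The helper name build_set_option is hypothetical, and only the --set syntax shown on this page is assumed. Because the escaping rules for values that contain double quotes are not covered here, the helper rejects such values instead of guessing.

```python
import shlex


def build_set_option(path, value):
    """Build the two arguments for --set with a quoted string value."""
    if '"' in value:
        # Escaping rules for embedded quotes are not covered on this page.
        raise ValueError("value must not contain double quotes")
    return ["--set", f'{path}="{value}"']


# Hypothetical metadata obtained elsewhere, such as from a build system.
vehicle_sw_version = "1.0.1"

args = ["foretify", *build_set_option("top.g_info.sim.vehicle_sw_version", vehicle_sw_version)]

# Show the equivalent shell command line without running it.
print(shlex.join(args))
```

Passing the arguments as a list (for example, to subprocess.run) avoids a second layer of shell quoting around the inner double quotes.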

Global metrics

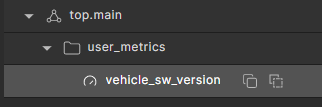

Once you have created, set, and recorded your global metric in your test suite, you can upload the test suite to Foretify Manager. You can then add it to a workspace or create a new workspace containing the test suite. The global metric appears in the VPlan under the event used to record it, user_metrics, in the case of the example above:

To learn more, see global metrics in the Foretify Manager documentation.

Example global metric

This example defines, sets, records, and logs a global metric named vehicle_sw_version.

OSC2 code: define, set and log a global metric (19-line listing)

In the listing:

- Line 7 defines the variable for the vehicle_sw_version metric.

- Line 8 sets the value of the metric.

- Line 11 defines an event when the metric is collected.

- Line 12 records the metric when the event occurs.

- Line 17 prints the value of the metric to the log file.

    Foretify> run
    Checking license...
    ...
    Starting the test ...
    Running the test ...
    Loading configuration ... done.
    [0.000] [MAIN] Executing plan for top.all@1 (top__all)
    [0.000] [PLANNER] Planned scenario time: 14.74 seconds
    [9.740] [MAIN] Vehicle SW version is 1.0.1.
    [14.740] [MAIN] Ending the run
    [14.740] [MAIN]
    [14.740] [MAIN] --------------------------------------------------------------------------------
    [14.740] [MAIN] Plan tries: 1, num of sut_errors: 0, Root: top.all@1 (top__all), Seed: 1
    [14.740] [MAIN] Main issue: none
    Run completed